Research Activities

Design of a new and improved SCALE2 hardware/software architecture

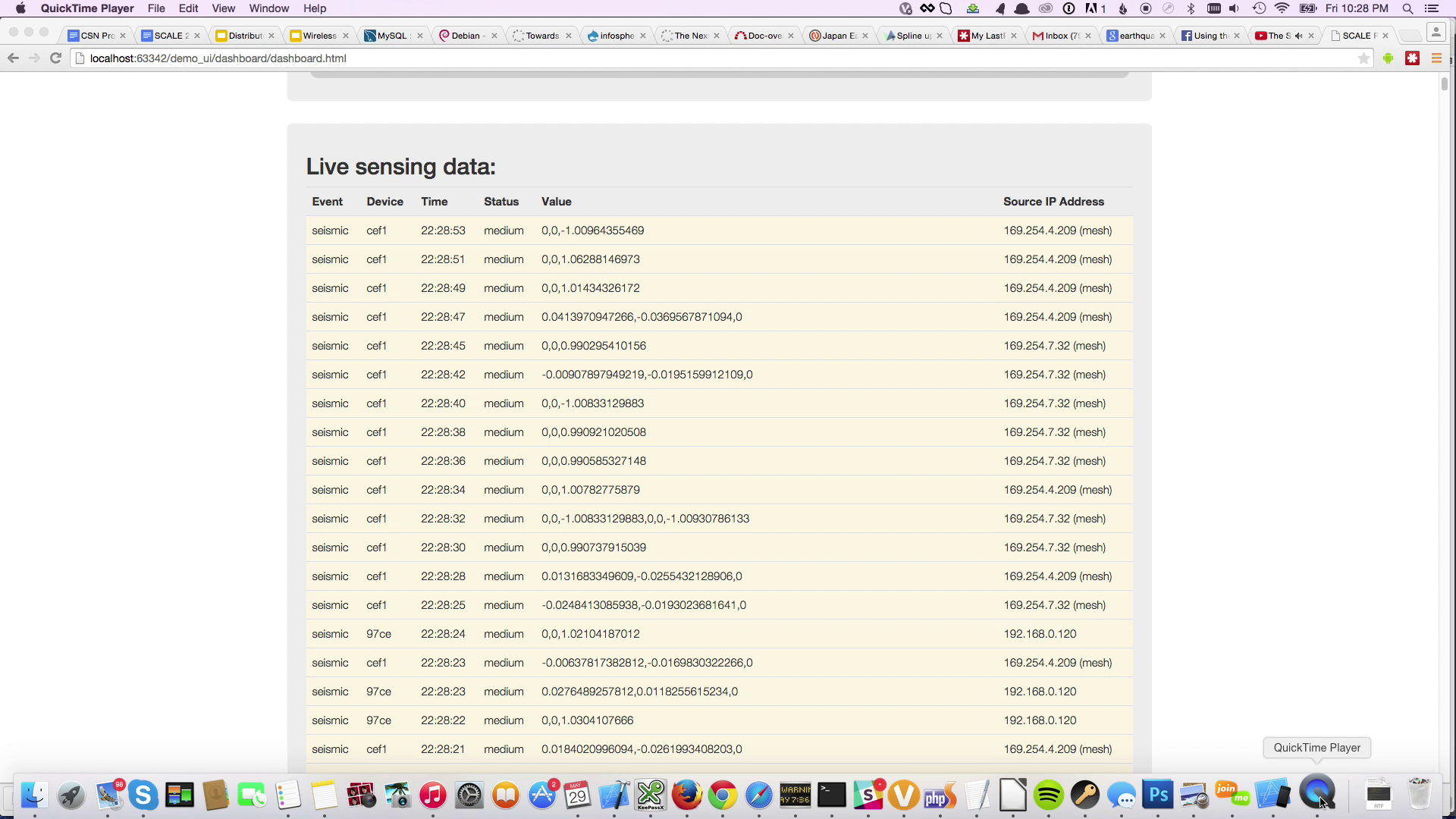

SCALE is an event-driven middleware platform in which devices upload sensed events to a cloud data exchange; an analytics service retrieves these events, scans them for possible emergencies, and sends alerts to residents, who confirm or reject the emergency. The preliminary SCALE system was designed and implemented over the course of four months, and so was predominantly an exercise in service composition and device interoperability as we integrated devices from our various partners into a single system. Aside from SCALE, the team also has experience deploying a local instance of the Community Seismic Network (CSN) on the UCI campus. SCALE devices from various partners have been deployed and tested in a senior living facility to experiment with various personal and community safety-oriented applications. We plan to expand the deployment of SCALE devices on the UCI campus, merging them with the CSN deployment, so that we can conduct more experimental tests without disrupting the devices in active use by real people. In the coming year, we aim to address the problem of data exchange in IoT systems by proposing and developing a multi-protocol distributed broker middleware. This architecture aims not to replace existing IoT data exchange protocols, but to enable a more flexible multi-broker solution that provides a degree of interoperability between commonly used IoT protocols without forcing the adoption of a new technology. We also aim to understand how multiple sensing technologies and human-provided input (wearable and mobile sensors, social media) can be integrated into the SCALE platform for improved awareness.

Extending the SCALE2 platform to handle a broader range of communication technologies

A plethora of communication technologies have been brought together to operate simultaneously and reliably in SCALE2. Here we elaborate on the SCALE2 IoT multi-networking architecture, which facilitates communication among sensor devices. The specific choice of networking technology typically depends on the sensor application as well as the constraints of the facility in which the sensors are deployed. In most installations, the limited reach of a facility’s cabling infrastructure to the sensor installation points precludes the widespread use of wired networking technologies (e.g., Ethernet) to support the sensor network, so wireless is the most viable option. Various wireless networking options were incorporated into the SCALE2 platform, such as Wi-Fi, Bluetooth and ZigBee. Wi-Fi is the most well-known wireless networking technology, but its high power profile can be a limiting factor. Bluetooth, on the other hand, has one of the lowest power profiles, yet has a limited range. ZigBee represents an intermediate solution built on IEEE 802.15.4, which incorporates a low-power wireless specification enabling mesh networking of sensor devices. 3G is good for outdoor deployment where Wi-Fi access is limited, but it is costly. Ultra narrow band (UNB) can be used for long-range communication; however, it supports only low-bandwidth communication and requires additional infrastructure (such as a base tower). As these examples illustrate, the power envelope and the distance between sensors in a particular sensor application can dictate the choice among wireless networking options.

Resilient Overlay Networks for cloud connectivity in SCALE2

We also considered the reliable delivery of sensor data from Internet of Things (IoT) devices to distributed cloud service instances in the face of localized/regional failures. This data must be collected quickly and reliably for event detection, community infrastructure management, and emergency response. We developed and explored the notion of GEOgraphically Correlated Resilient Overlay Networks (GeoCRON) to capture the localized nature of community IoT deployments and their impact/vulnerability in the context of small failures and massive disruptions such as those caused by a large seismic event. As a focus use case, we studied the viability and utility of GeoCRON in two different community sensor networks. The first, a large participatory sensing-style network of small inexpensive seismic sensors, reports ground motion in homes and businesses to a cloud service for detecting and characterizing earthquakes. The second, a smaller infrastructure-based network of wireless devices embedded in the water distribution network, reports water pressure changes at pipe junctions that may be indicative of leaks that require human intervention. Using realistic communications network topologies derived from experiences with real-world community-scale deployments and taking into account realistic deployment considerations such as population density and cost, we studied the impact of geo-correlated failures on the combined network infrastructure and evaluated a range of GeoCRON heuristics to improve communications resilience in this context. We validated the promise of the proposed GeoCRON-based approach using extensive simulations and an initial prototype system implementation. This work resulted in a publication and corresponding presentation at the IEEE First International Conference on Internet-of-Things Design and Implementation (IoTDI 2016).

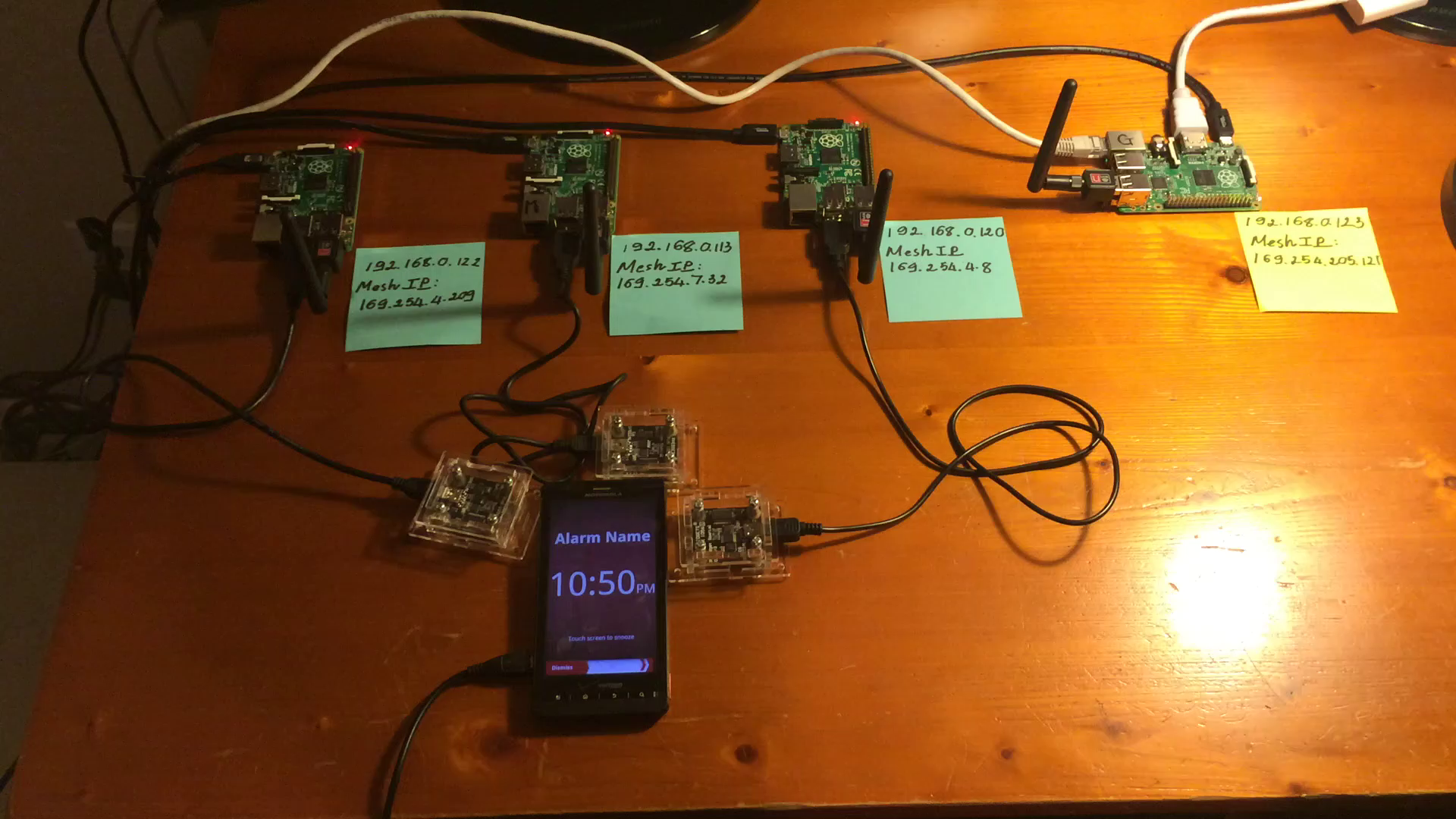

Integrating edge mesh network and client side sense-making

To address situations where external connectivity to cloud platforms is a challenge, we developed a decentralized approach with an edge mesh network in the sensing area for local detection and notification of events, mainly seismic events detected through community sensors. Here, instead of sending data to a remote server, IoT nodes (e.g., SCALE boxes) exchange data with each other through a local wireless ad hoc mesh network and analyze the data they have obtained themselves. However, due to the noise, interference, and fading of wireless communication and the sparse deployment (not all neighbors want to participate), we cannot completely decentralize the detection and notification of rare events. To address these challenges, we propose a hybrid architecture in which each sensor node is equipped with two networks: the backbone Internet and a local wireless ad hoc mesh network. In this architecture, nodes organize themselves into a k-ary tree. The tree is kept relatively small by limiting both the number of children each node can have and the height of the tree, which reduces the cost of constructing it and, when required, reconstructing it. Each tree has a root node nominated by the remote server based on its geo-location and member nodes. Each node can belong to multiple trees, and nearby trees are connected to each other by their common member nodes. Each node uses a fast dissemination algorithm to send its own data to other member nodes. In this algorithm, data is disseminated to all the parent nodes first and then to child nodes that have not already obtained the data (from some other node). Each node also submits its data and geo-location to the remote server, which combines data from all the regions for overall analysis. When the remote server detects an event in a specific area, it disseminates alerts back to the sensor nodes in that location that have not reported any sensing data about the event.
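The parent-first dissemination order described above can be illustrated with a minimal Python sketch. It assumes a single tree and instantaneous, loss-free delivery; the `Node` class, the `disseminate` function, and the breadth-first order used in the second phase are hypothetical choices made for illustration, not the SCALE2 implementation.

```python
from collections import deque


class Node:
    """A member of one k-ary tree (hypothetical structure for illustration)."""

    def __init__(self, name, max_children=2):
        self.name = name
        self.max_children = max_children  # the "k" that bounds the tree width
        self.parent = None
        self.children = []
        self.seen = set()  # ids of data items this node already holds

    def add_child(self, child):
        if len(self.children) >= self.max_children:
            raise ValueError(f"{self.name} already has {self.max_children} children")
        child.parent = self
        self.children.append(child)


def disseminate(origin, data_id):
    """Spread data_id from origin and return the order in which nodes receive it."""
    order = []

    def deliver(node):
        # Skip nodes that already obtained the data from some other node.
        if data_id in node.seen:
            return False
        node.seen.add(data_id)
        order.append(node.name)
        return True

    deliver(origin)

    # Phase 1: send the data up the chain of parent nodes first.
    holders = [origin]
    node = origin.parent
    while node is not None:
        deliver(node)
        holders.append(node)
        node = node.parent

    # Phase 2: push the data down to children that do not hold it yet.
    queue = deque(holders)
    while queue:
        holder = queue.popleft()
        for child in holder.children:
            if deliver(child):
                queue.append(child)
    return order


# Usage: a small binary tree rooted at "r"; node "c" senses an event.
r, a, b, c, d, e = (Node(n) for n in "rabcde")
r.add_child(a)
r.add_child(b)
a.add_child(c)
a.add_child(d)
b.add_child(e)
print(disseminate(c, "event-1"))
```

In this sketch the `seen` set is what prevents a node from receiving the same data twice, which matters once a node belongs to several overlapping trees.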

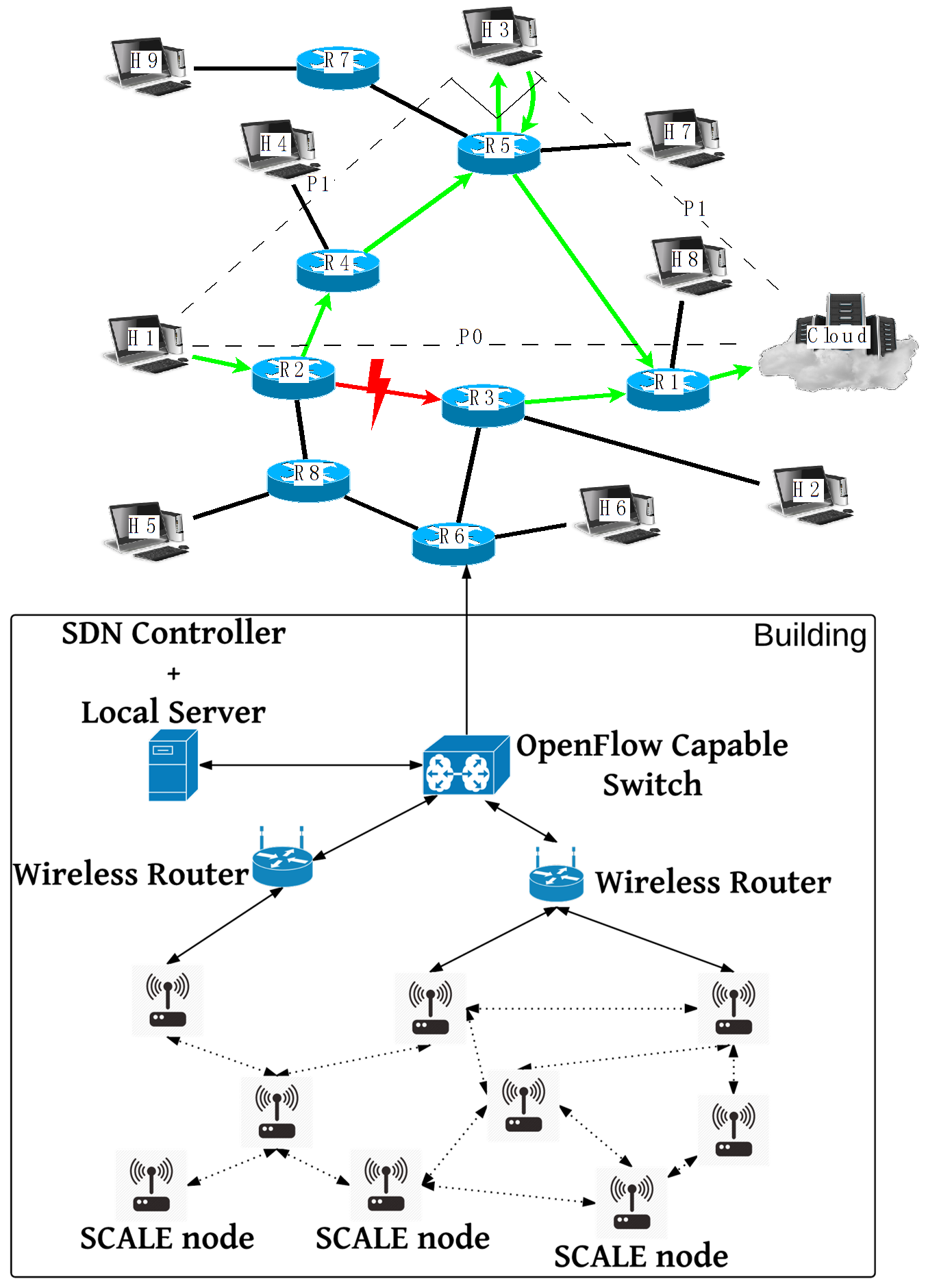

Enabling SDN based management of SCALE2 networks

In another management effort in SCALE2, we propose a network-aware IoT edge service architecture that uses Software-defined Networking (SDN) and edge computing concepts to improve the reliability and performance of current cloud-based IoT applications. As a proof of concept, a prototype of the proposed architecture has been implemented in one of our local lab networks using an SDN controller and switch hardware from Extreme Networks. Most of the applications running on the SCALE platform are cloud-based IoT applications. While they benefit from all the advantages that cloud services offer, they also suffer from several drawbacks. Two major issues are (i) outages of our cloud service and (ii) failures of the link between the local network and the cloud server. To cope with cloud server outages, one intuitive solution is to add edge servers in the local network. Such a hierarchical three-tier approach, however, can break the simplicity of client program design that a cloud-based IoT service architecture promises. Ultimately, the goal is to enable service capabilities to exist in the edge network: the ability to execute services at both the edge and the cloud keeps the client program design simple and facilitates easy scaling of the system.

To meet the above-mentioned requirements, we are developing a network-aware IoT edge service architecture based on the SDN philosophy. A conventional IoT service includes two parts: IoT client devices deployed in the local environment and the cloud server. The IoT client devices are connected to the local network and communicate with the cloud server through the Internet. Our proposed architecture adds or changes two components in this scheme. First, it replaces the local network switches with SDN-enabled switches and connects them to a central SDN controller. The SDN controller can change the flow entries and query the statistics on these switches through southbound protocols such as OpenFlow, while exposing northbound APIs to authorized users or applications. Second, for each cloud-based IoT service that is to be enhanced with the network-aware IoT edge service, a corresponding edge server capable of calling the SDN controller's APIs is deployed in the local network.

The proposed network-aware IoT edge service runs as an “Observe-Analyze-Act” (OAA) loop. In the “observe” phase, the edge server measures both the local network condition and the cloud link condition by running a network performance testing tool that queries local network statistics from the SDN controller. Based on the network statistics gathered, in the “analyze” phase the edge server decides whether the current networks meet the requirements of the IoT service, and then triggers the proper actions in the “act” phase to help improve the service. To verify the proposed architecture, we implemented a prototype system with an OpenFlow-capable switch (Summit x440p) supported by Extreme Networks. The gateway switch in our lab was replaced by this Extreme Networks switch, which is managed by an OpenDaylight SDN controller using the OpenFlow protocol. An edge server, which runs the same services as the SCALE server, was implemented and deployed in our lab network. The experiment shows that, without modifying the SCALE client nodes in the local network, sensing data is redirected to the edge server within 3-5 seconds of a cloud service outage.
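The control flow of one OAA iteration can be sketched in Python as follows. This is an illustrative skeleton under stated assumptions: the `NetworkStats` fields, the 500 ms round-trip threshold, and the `observe`/`act` callbacks are hypothetical placeholders. In a real deployment, `observe` would wrap the measurement tool and the controller statistics query, and `act` would install flow entries through the controller's northbound API; neither is modeled here.

```python
from dataclasses import dataclass


@dataclass
class NetworkStats:
    """Snapshot gathered in the observe phase (fields are assumptions)."""
    cloud_reachable: bool
    cloud_rtt_ms: float


def analyze(stats, max_rtt_ms=500.0):
    """Decide which server should receive sensing data: 'cloud' or 'edge'."""
    if not stats.cloud_reachable or stats.cloud_rtt_ms > max_rtt_ms:
        return "edge"
    return "cloud"


def oaa_step(observe, act, current_mode, max_rtt_ms=500.0):
    """Run one Observe-Analyze-Act iteration and return the resulting mode."""
    stats = observe()                    # observe: collect network statistics
    target = analyze(stats, max_rtt_ms)  # analyze: do they meet the requirements?
    if target != current_mode:
        act(target)                      # act: e.g. redirect flows to the target
    return target


# Usage with canned measurements standing in for real probes.
samples = iter([
    NetworkStats(True, 40.0),   # healthy cloud link
    NetworkStats(False, 0.0),   # cloud outage
    NetworkStats(True, 55.0),   # cloud link restored
])
redirects = []
mode = "cloud"
for _ in range(3):
    mode = oaa_step(lambda: next(samples), redirects.append, mode)
print(redirects)
```

Because `act` is called only when the target mode changes, the sketch avoids reinstalling the same redirection on every iteration.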

In the coming year, we plan to explore and develop algorithms/techniques to determine when to switch from edge to cloud mode to maximize application flow objectives. This will require further investigation into what other specific information must be observed by the SDN mechanisms to help make improved flow control decisions. Using measurement studies on the testbed, we will develop methods to reduce application flow handling overheads to meet critical IoT application timing needs. Extensions to specific access network technologies are also being explored through collaborative research with Prof. Cheng-Hsin Hsu and his research group from National Tsing Hua University (NTHU); one example is improving device mobility in a software-defined Wi-Fi network.

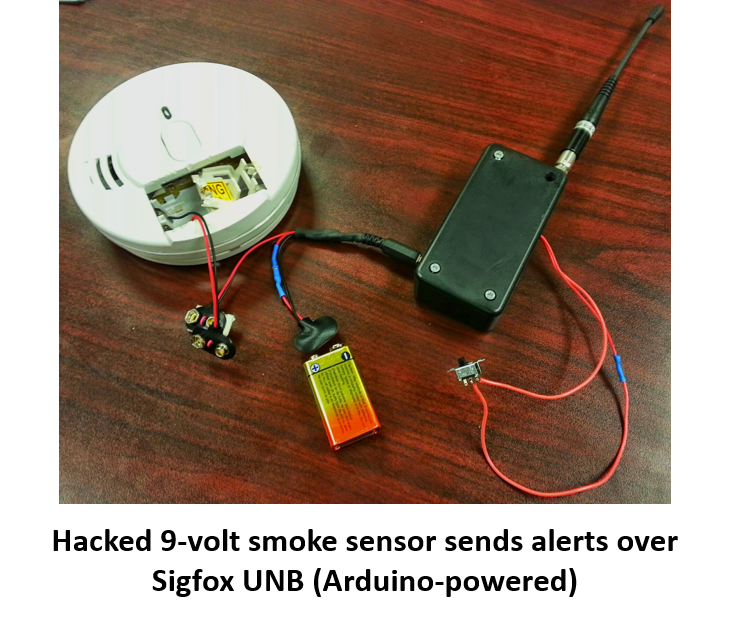

Extending the SCALE2 platform to handle multiple communication technologies

A range of communication technologies have been brought together to operate simultaneously and reliably in the SCALE2 IoT multi-networking architecture. This year, we improved the ability to incorporate and integrate various wireless networking technologies into the SCALE2 platform, including Wi-Fi, Bluetooth, Ultra-narrowband and ZigBee. In particular, we focused on utilizing 3G due to its ability to support outdoor deployment (see the EnviroSCALE deployment below for specifics) and its pervasive availability in global settings/deployments. Our experiences were reported in an invited paper on SCALE2 at SMARTCOMP 2016. In ongoing and future work, we plan to design dynamic access selection techniques across multiple wireless technologies based on scenario constraints and access network characteristics to enable reliable IoT data collection and control.

Supporting IoT Data Collection on Budgeted 3G Data Connections

We designed and implemented an IoT-based environment sensing platform leveraging SCALE, called EnviroSCALE, for measuring air quality in developing cities. EnviroSCALE is deployed in Dhaka, the capital of Bangladesh. The field device of EnviroSCALE consists of a set of air quality sensors that periodically sense the environment and upload the sensed data to a backend server or to the cloud. Since developing cities do not have widespread free public Wi-Fi networks, we explored issues in utilizing cellular connections (e.g., GPRS or 3G), which are available in many such cities. Unlike Wi-Fi, cellular connections are expensive and metered; hence, the periodic sensing intervals at which data is gathered should be set so that the data generation and upload rate does not exceed the constrained data budget. We explored infrastructure-level and information-level techniques for addressing such communication limitations. At the infrastructure level, we adjust the sampling intervals of sensors to fit within the limited data budget. We refer to this problem as rate-limited sensing and uploading, and propose solutions for the necessary adaptations. Techniques for choosing sensing intervals have been designed based on the degree of variation in the sensed data, so that the sum of data rates from all sensors remains within a given data budget. We also avoid verbose data exchange formats such as JSON when uploading data over MQTT; instead, we use binary encoding to reduce the data volume of individual sensor readings. We built a prototype system with a set of air quality sensors (MQ2, MQ4, MQ6, MQ135), temperature and humidity sensors (DHT11), and a GPS receiver on board, and deployed it in Dhaka for air quality monitoring for an extended period of time using a cellular (3G) connection on a weekly subscribed data plan obtained from a local telecom operator.
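As a rough illustration of the two infrastructure-level ideas above, the following Python sketch packs a reading into a fixed binary layout and derives sensing intervals that keep the total data rate within a budget. The 10-byte layout, the proportional-to-variation allocation rule, the variation weights, and the 100 MB weekly plan are all assumptions made for this example; they are not the encoding or the algorithm used in EnviroSCALE, and MQTT and cellular protocol overheads are ignored.

```python
import json
import struct

# Assumed layout: uint32 timestamp, uint16 sensor id, float32 value (little-endian).
READING_FORMAT = "<IHf"


def encode_reading(timestamp, sensor_id, value):
    """Pack one reading into 10 bytes instead of a verbose text encoding."""
    return struct.pack(READING_FORMAT, timestamp, sensor_id, value)


def decode_reading(payload):
    """Inverse of encode_reading; returns (timestamp, sensor_id, value)."""
    return struct.unpack(READING_FORMAT, payload)


def choose_intervals(sensors, budget_bytes_per_s):
    """Split the byte budget across sensors in proportion to their variation.

    sensors maps a name to (bytes_per_reading, variation_weight), with positive
    weights. Returns seconds between readings for each sensor, such that the
    summed data rate equals the budget.
    """
    total_weight = sum(weight for _, weight in sensors.values())
    intervals = {}
    for name, (size, weight) in sensors.items():
        share = budget_bytes_per_s * weight / total_weight  # bytes/s for this sensor
        intervals[name] = size / share
    return intervals


# Usage: hypothetical weekly plan and hypothetical variation weights.
WEEKLY_BUDGET_BYTES = 100 * 1024 * 1024
budget = WEEKLY_BUDGET_BYTES / (7 * 24 * 3600)
size = struct.calcsize(READING_FORMAT)
sensors = {"MQ2": (size, 3.0), "MQ135": (size, 2.0), "DHT11": (size, 1.0)}
for name, seconds in choose_intervals(sensors, budget).items():
    print(f"{name}: one reading every {seconds:.3f} s")

binary_size = len(encode_reading(1500000000, 2, 0.5))
json_size = len(json.dumps({"ts": 1500000000, "id": 2, "value": 0.5}))
print(binary_size, json_size)
```

Under this rule, a sensor whose readings vary more receives a larger share of the budget and is therefore sampled more often.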

We also explored the use of information-level adaptation techniques that utilize on-board analytics for event extraction from raw data. We extended the EnviroSCALE platform by introducing container technologies to run application-specific analytics on the device where sensor data is being collected, e.g., Docker containers for packaging rich media analytics with their software dependencies, and Kubernetes for provisioning IoT devices and deploying Docker images. This is particularly important when multimedia sensors such as audio and video cameras are involved. Here, instead of sending large raw media data, on-board media analytics extract useful intermediate representations from the media content, e.g., feature vectors for image detection/recognition. Since these analytic requirements are application specific and can be dynamically needed or modified based on the problem at hand, the container-based architecture enables on-demand deployment and activation of specific media analytic modules. We plan to explore more adaptive triggering, in which analytics run only when an event is detected by the always-on sensors, for example activating the camera only when smoke is detected inside a room (running analytics all the time would otherwise be expensive). To limit data communication overhead over 3G with limited data plans, the Docker images are preloaded on the board, and only a small footprint of analytic code is transferred when required. Our initial experimental results demonstrate the practicality of triggering rich sensing analytics via user-specified criteria, even over constrained data connections. The system, the deployment experiences in Dhaka, and the adaptation algorithms have resulted in multiple publications, including a demo paper and a workshop paper at ACM Middleware on the adaptation techniques. Our initial efforts in using container technologies have been accepted for publication at IEEE CloudCom 2017, and a detailed conference paper is in progress as well.

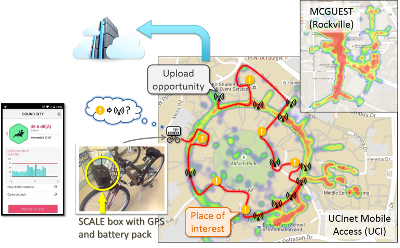

Incorporating mobile sensing and data collection in SCALE2

We found that community IoT systems and applications are still limited by the intermittent and varying coverage of sensing/communication infrastructures. First, IoT systems depend heavily on Internet infrastructures, which are not uniformly and continuously available or accessible. They can be congested or damaged in large disruptive events such as disasters, and it is often in such situations that dynamic information from impacted regions is most useful. Second, due to limitations of regions, terrains, and infrastructure availability, providing complete coverage with sensing capacity can be costly and infeasible. Additionally, some forms of sensed information may only be useful in rare events (e.g., chemical release during fires), and custom deployments for such rare events are not cost-effective. Therefore, we believe that intuitive and flexible approaches for instrumentation/deployment based on need are essential to enhancing the resilience of existing IoT systems. One promising solution is to deploy mobile agents/entities to augment in-situ deployments and provide more complete, timely, and accurate coverage of events/phenomena (e.g., disaster damage).

An interesting question in the context of mobile data collection is how to ensure timely upload of gathered information, under intermittent network coverage, to cloud platforms where deeper analysis can be conducted. We formalized this problem as an NP-hard constrained optimization, designed a two-phase approach combining static planning and dynamic adaptation, and proposed algorithms/policies for both phases.

To validate the network availability issues, conduct measurements on real testbeds, and augment the SCALE2 deployments with mobility, we developed and prototyped SCALECycle, a mobile sensing and data collection platform based on SCALE. The current SCALECycle mobile data collector (MDC) prototype is equipped with a USB battery pack for power supply, a Bluetooth GPS for locating and geo-tagging, and a TGS-2600 air contaminant sensor for air-quality sensing. It also uses its Wi-Fi dongle for Wi-Fi RSSI heat-mapping. The platform can sit on a bicycle, as the name SCALECycle suggests, or simply stay in a backpack. With the SCALECycle MDC, we conducted measurements on the UCI campus and around Victory Court Senior Apartments and Thingstitute in Montgomery County, MD. The data we collected in those real settings revealed the heterogeneity in community IoT deployments and network infrastructures, and validated the motivation and several assumptions we made in our related research problems.

The efficient mobile data collection research resulted in the publication “Upload Planning for Mobile Data Collection in Smart Community Internet-of-Things Deployments”. In the coming year, we will extend the mobile data collection planning and upload problem to a multi-timescale setting with heterogeneous and multimedia workloads. We also plan to consider a range of access networks and cost/benefit tradeoffs in this context.

Enabling Perpetual Personal Sensing in SCALE2

In the last year, we have expanded our work on personal IoT awareness systems. Our work in this direction includes:

(a) An ambient system that observes the status of multiple connected sensors deployed on the ground, detects different activities of daily living (ADLs), and enables immediate assistance by contacting and sending alerts to healthcare contacts when a fall is detected. We implemented a 4 by 6 feet prototype using force-sensing resistor (FSR) pressure sensors in our lab. We deployed the sensors on the ground in a balanced distribution based on statistics of human body dimensions. We then gathered and processed the sensor readings on the fly using a procedure inspired by the connected-components labeling algorithm and the union-find data structure. The preliminary evaluation showed a lack of reliability due to node failures. New resilient data collection methods for this setting are being developed.
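To make the connected-components idea concrete, here is a minimal Python sketch that groups adjacent activated pressure sensors into regions using a union-find structure. The grid representation, the activation threshold, and the use of 4-connectivity are assumptions for illustration; the actual procedure used with the prototype is only described as being inspired by these techniques.

```python
def find(parent, cell):
    """Return the representative of cell's set, halving paths along the way."""
    while parent[cell] != cell:
        parent[cell] = parent[parent[cell]]
        cell = parent[cell]
    return cell


def union(parent, a, b):
    """Merge the sets containing cells a and b."""
    root_a, root_b = find(parent, a), find(parent, b)
    if root_a != root_b:
        parent[root_b] = root_a


def label_regions(grid, threshold):
    """Group activated sensors (reading >= threshold) into 4-connected regions.

    grid is a list of rows of pressure readings. Returns a sorted list of
    regions, each a sorted list of (row, col) sensor positions.
    """
    active = {
        (r, c)
        for r, row in enumerate(grid)
        for c, reading in enumerate(row)
        if reading >= threshold
    }
    parent = {cell: cell for cell in active}
    for r, c in active:
        # Checking only the lower and right neighbours covers every adjacent pair.
        for neighbour in ((r + 1, c), (r, c + 1)):
            if neighbour in active:
                union(parent, (r, c), neighbour)
    regions = {}
    for cell in active:
        regions.setdefault(find(parent, cell), []).append(cell)
    return sorted(sorted(cells) for cells in regions.values())


# Usage: a tiny 3x4 mat with one three-sensor contact area and one isolated sensor.
mat = [
    [0, 5, 5, 0],
    [0, 0, 5, 0],
    [7, 0, 0, 0],
]
print(label_regions(mat, threshold=1))
```

The size and shape of each labeled region could then be passed to a later classification step, which is outside the scope of this sketch.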

(b) A wearable system that integrates a sensor tag and a mobile phone to capture anomalous events (e.g., falls). Such a system develops a virtual sensor abstraction by observing information from multiple sensors (accelerometer, gyroscope) to detect a host of daily activities and anomalous events (e.g., falls). We measured the energy cost of running a fall detection algorithm using accelerometer data from different sources, focusing on energy consumption when the system is actively running as a background service. Using a Monsoon Power Monitor, we measured two approaches for the fall detection application to receive accelerometer data. The first test uses the Nexus 4's built-in accelerometer. The second test uses a TI CC2541 SensorTag to send accelerometer data, via the Bluetooth Low Energy (BLE) protocol, to the fall detection application on the Nexus 4. The results show that the BLE approach reduces energy consumption, with a 50% improvement in power usage compared to the on-chip accelerometer. Even though the accelerometer in the SensorTag consumes less power, the SensorTag also runs on a battery, which means its communication with the Android device is also limited by its constant Bluetooth transmission and sensor usage.

While each system achieves a certain level of accuracy, each also suffers from drawbacks. The ambient system suffers from limited reliability, and the wearable system from power consumption. Therefore, combining multiple sensors can enhance performance under real-life conditions by improving accuracy, reliability, usability, and power consumption.

Exploring the role of community input via social media information extraction

Social media is often an important source of awareness-related information in public safety scenarios and can be used both to trigger more in-depth data collection and to improve the reliability of information extraction, masking inaccuracies and variabilities in IoT devices. To enable the integration of community-related information captured in social media, Twitter in particular, we have started to explore the integration of a Tweet Acquisition System (TAS) into SCALE2 to gather and extract key information from Twitter social media streams. The basic idea is to use an initial set of phrases/terms of relevance to a topic of interest that local government officials could use. Such phrases could be specified by the user and/or learnt through available ontologies, knowledge bases or topic-focused content from other sources (e.g., news stories). At any instant, TAS maintains an estimate of the relative degree of importance of each phrase and uses this model to dynamically ascertain the relevance of incoming tweets to the topic of interest. The relevance measure also incorporates features about the tweeter (i.e., the person who posted the tweet), as well as the statistics of pattern co-occurrences. TAS follows an iterative process with the objective of maximizing recall by automatically changing the query and updating the set of phrases representing the topic of interest. The basic intuition is that the “current” patterns trending in relevant tweets can help retrieve more relevant tweets in the “near” future and, furthermore, that “repetitive co-occurrence” of terms can help identify additional patterns that may empower the acquisition system. TAS uses a reinforcement learning algorithm to adaptively select a set of phrases that strikes a balance between exploring the importance of different phrases and exploiting the phrases it has determined to be of high retrieval quality.
Our initial experimental analysis has shown that TAS performs significantly better than competing alternatives at retrieving tweets for specific topics. Also, even though TAS relies on the initial representation of the topic of interest provided by the client/application, it dynamically updates the representation by leveraging the relevant patterns in previously acquired tweets, making it robust to incomplete and/or suboptimal specification of the initial query. Given this nature of TAS, we believe it is well suited for collecting dynamic, event-specific tweets in our envisioned scenarios, where the aim is to retrieve tweets that help us better understand ongoing events.
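The exploration/exploitation trade-off in phrase selection can be illustrated with a simple epsilon-greedy bandit in Python. This is a stand-in technique chosen for brevity: the text above does not specify which reinforcement learning algorithm TAS uses, and the `PhraseSelector` class, its reward definition (fraction of retrieved tweets judged relevant), and the example phrases are all hypothetical.

```python
import random


class PhraseSelector:
    """Epsilon-greedy choice of query phrases (illustrative stand-in only)."""

    def __init__(self, phrases, epsilon=0.1, seed=None):
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.counts = {p: 0 for p in phrases}    # times each phrase was scored
        self.values = {p: 0.0 for p in phrases}  # running mean reward per phrase

    def select(self, k):
        """Pick up to k distinct phrases for the next query."""
        pool = sorted(self.values, key=self.values.get, reverse=True)
        chosen = []
        while pool and len(chosen) < k:
            if self.rng.random() < self.epsilon:
                pick = self.rng.choice(pool)  # explore: try any remaining phrase
            else:
                pick = pool[0]                # exploit: best remaining estimate
            pool.remove(pick)
            chosen.append(pick)
        return chosen

    def update(self, phrase, relevant, total):
        """Fold the share of relevant tweets retrieved by phrase into its estimate."""
        reward = relevant / total if total else 0.0
        self.counts[phrase] += 1
        n = self.counts[phrase]
        self.values[phrase] += (reward - self.values[phrase]) / n


# Usage with made-up feedback from one acquisition round.
selector = PhraseSelector(["earthquake", "shaking", "traffic"], epsilon=0.2, seed=7)
selector.update("shaking", relevant=8, total=10)
selector.update("earthquake", relevant=5, total=10)
selector.update("traffic", relevant=1, total=10)
print(selector.select(k=2))
```

A larger `epsilon` spends more queries on phrases whose usefulness is still uncertain, while a smaller one concentrates on phrases that have already retrieved relevant tweets.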

This web page was last updated on Tuesday, December 19, 2017.

Webmaster: Qiuxi (Charles) Zhu <qiuxiz (-AT-) ics (-DOT-) uci (-DOT-) edu>